In Philippine education markets, products often fail not from weak content but from poor contextual fit and usability. Structured product feedback surveys surface actual classroom conditions, guiding academic service optimization across diverse institutions.

-

Outcome-linked feedback design

Surveys connect product experience to learning outcomes, retention, and progression, giving institutions and policymakers feedback that reflects academic impact rather than superficial satisfaction or isolated feature preferences.

-

Role-segmented respondent capture

Instruments differentiate students, parents, teachers, and administrators, capturing role-specific perspectives on usability, pedagogical alignment, support quality, and institutional adoption constraints in both public and private institutions.

-

Lifecycle-stage measurement

Feedback spans the discovery, onboarding, active use, assessment, and renewal stages and is tracked over time and across cohorts, revealing friction points throughout the academic service lifecycle rather than single-moment impressions.

-

Curriculum and standards alignment

Questions evaluate content relevance to national curricula, accreditation requirements, and exam frameworks, so that the product fits Philippine compliance requirements and teaching realities across subjects and grades.

-

Usability and access equity

Instruments assess interface clarity, device compatibility, connectivity tolerance, language accessibility, and assistive features, reflecting the diverse infrastructure and learner needs of Philippine schools nationwide, including remote and low-resource schools.

-

Quant-qual triangulation

Structured ratings integrate with open responses and task observations, strengthening diagnosis of learning barriers, feature gaps, and service delivery weaknesses affecting educational effectiveness.

-

Actionable prioritization outputs

Results translate into improvement roadmaps, segment-specific fixes, and evidence-based feature investments, enabling providers to optimize academic services for measurable outcome gains within institutional budgets.

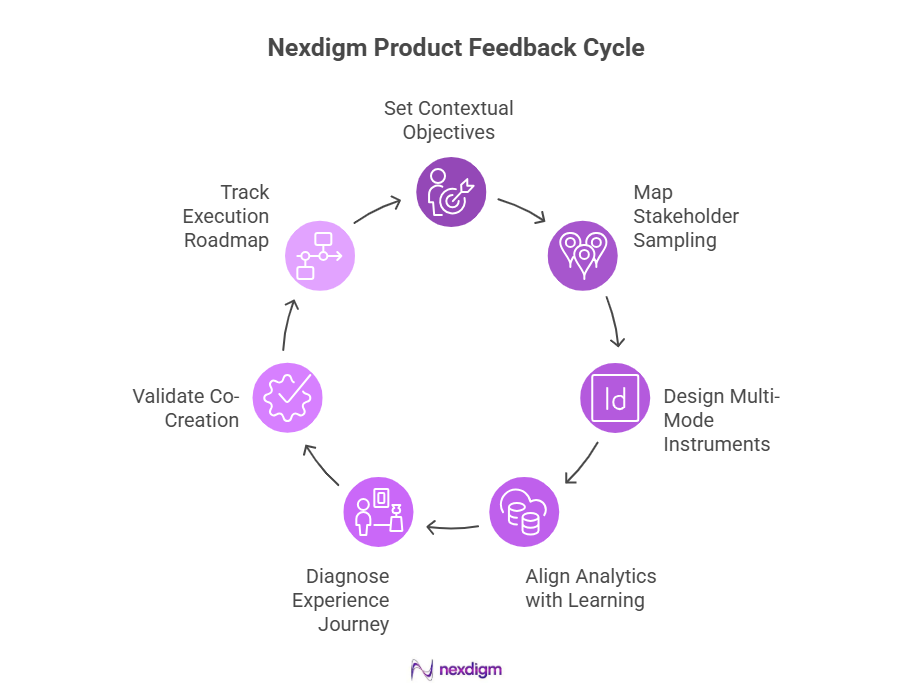

Nexdigm Product Feedback Consumer Survey Framework

Turning diverse education feedback into reliable product decisions requires structured design, representative capture, and learning-linked analytics. Nexdigm’s approach aligns Philippine institutional contexts with measurable academic service improvement and scalable deployment outcomes.

-

Contextual objective setting

Engagement begins with institution goals, learner segments, and product scope, defining decision-linked questions and success metrics that are tailored to Philippine education service conditions and aligned with regulatory and curriculum requirements.

-

Stakeholder-mapped sampling

Sampling frames cover students, parents, teachers, administrators, and channel partners, ensuring representative coverage across regions, delivery modalities, and school types, including public and private institutions.

-

Multi-mode instrument design

Surveys are deployed in mobile, web, and assisted formats with localized language, accessibility support, and low-bandwidth optimization to maximize response quality across the varied connectivity environments of Philippine schools.

-

Learning-aligned analytics

Models link satisfaction, usage, and feature perceptions to engagement, completion, and achievement indicators, isolating the drivers of academic service performance and product value, differentiated by subject and grade level.

-

Experience journey diagnostics

Analysis maps the journey from discovery to renewal within each institution, quantifying friction, support gaps, and adoption barriers in onboarding, classroom integration, assessment workflows, and teacher enablement.

-

Co-creation validation loops

Findings feed workshops with educators and product teams, where solutions are tested, fixes prioritized, and improvements validated through iterative pilot cycles before academic services are deployed at scale.

-

Execution roadmap and tracking

Outputs convert into phased plans, KPIs, and dashboards, enabling institutions and providers to monitor gains in adoption, learning outcomes, and service efficiency at the course and program level.

Nexdigm Case

A Philippine edtech provider partnered with Nexdigm to evaluate feedback on a blended learning platform across 120 schools. Surveys covering students, teachers, and administrators revealed onboarding friction and curriculum misalignment. Post-optimization, active usage rose 34%, teacher satisfaction improved 27%, and renewal intent reached 82%. Institutions reported measurable gains in lesson completion consistency and reduced support requests within two academic terms.
