Market Overview

The India AI servers and GPU hardware market is valued at approximately USD ~ billion, based on recent infrastructure deployment disclosures from IDC and national digital infrastructure procurement records. Demand is driven by hyperscale GPU cloud region expansion, sovereign AI compute programs, and enterprise AI adoption across financial services, telecom, manufacturing, and digital platforms. Large language model training, analytics acceleration, and AI-as-a-service offerings are increasing the deployment of GPU-dense servers and accelerator hardware across domestic data center ecosystems.

Bengaluru, Hyderabad, Mumbai, and Chennai dominate deployment due to hyperscale data center concentration, semiconductor design ecosystems, and strong enterprise IT spending environments. These cities host major cloud regions, colocation hubs, and AI research clusters enabling dense GPU infrastructure installation. India also benefits from accelerator imports and technology partnerships with the United States, Taiwan, and South Korea, whose semiconductor supply chains support domestic AI server integration and large-scale deployment across metropolitan digital infrastructure hubs.

Market Segmentation

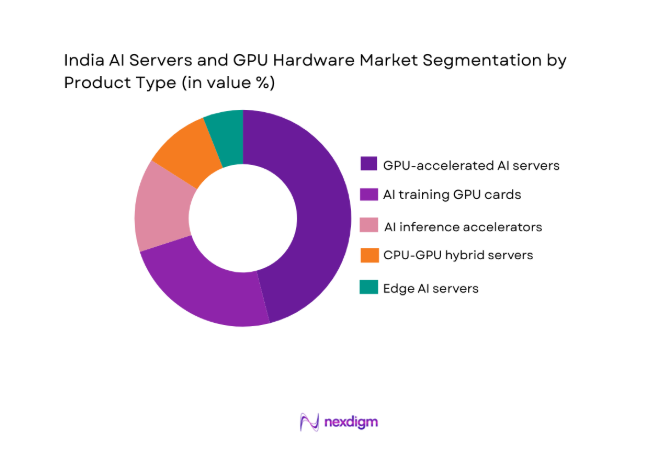

By Product Type

The India AI servers and GPU hardware market is segmented by product type into GPU-accelerated AI servers, AI training GPU cards, AI inference accelerators, CPU-GPU hybrid servers, and edge AI servers. GPU-accelerated AI servers currently hold the dominant market share, driven by hyperscale AI training workloads, sovereign GPU cluster deployments, and enterprise generative AI adoption. Large language model training and high-performance analytics require tightly integrated multi-GPU server architectures with high-bandwidth interconnects and memory, favoring rack-scale GPU servers over discrete accelerators. Hyperscale and government compute programs procure complete GPU server racks for performance density and energy efficiency, reinforcing the segment's dominance.

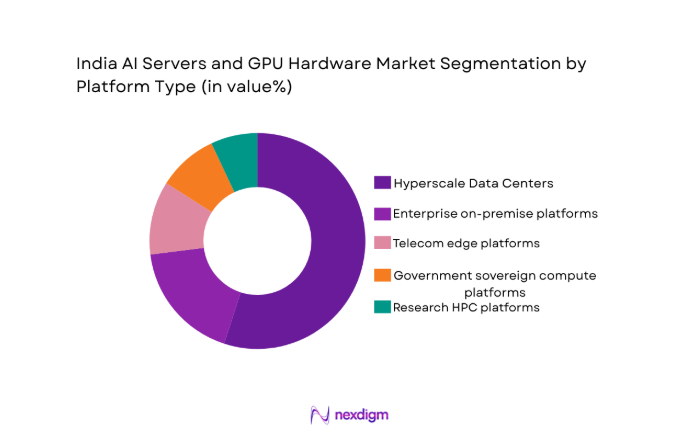

By Platform Type

The India AI servers and GPU hardware market is segmented by platform type into hyperscale data center platforms, enterprise on-premise platforms, telecom edge platforms, government sovereign compute platforms, and research HPC platforms. Hyperscale data center platforms currently hold the dominant market share, driven by large-scale GPU cluster installations, AI cloud commercialization, and dense rack infrastructure availability. Hyperscale providers deploy thousands of GPUs per facility to deliver AI cloud services and enterprise workloads, resulting in far higher procurement volumes than other platforms. Continuous expansion of domestic GPU cloud regions further concentrates hardware deployment within hyperscale data centers.

Competitive Landscape

The India AI servers and GPU hardware market is moderately consolidated, with global accelerator vendors and server OEMs controlling high-performance GPU supply while domestic integrators participate in system assembly and deployment. NVIDIA’s CUDA ecosystem dominance shapes accelerator standards, while AMD and Intel compete in alternative GPU and AI accelerator architectures. Hyperscale procurement agreements and sovereign compute programs strongly influence vendor positioning, and localization initiatives are gradually expanding domestic integration participation.

| Company Name | Establishment Year | Headquarters | Technology Focus | Market Reach | Key Products | Revenue | AI Accelerator Architecture |
| --- | --- | --- | --- | --- | --- | --- | --- |
| NVIDIA | 1993 | USA | ~ | ~ | ~ | ~ | ~ |
| Advanced Micro Devices | 1969 | USA | ~ | ~ | ~ | ~ | ~ |
| Intel | 1968 | USA | ~ | ~ | ~ | ~ | ~ |
| Hewlett Packard Enterprise | 2015 | USA | ~ | ~ | ~ | ~ | ~ |
| Dell Technologies | 1984 | USA | ~ | ~ | ~ | ~ | ~ |

India AI Servers and GPU Hardware Market Analysis

Growth Drivers

National Sovereign AI Compute Infrastructure Expansion

India is rapidly investing in sovereign AI compute capacity through national AI missions, public supercomputing programs, and government-supported GPU clusters deployed in domestic data centers to support indigenous AI development across language models, healthcare analytics, agriculture intelligence, and public digital services. These initiatives require large-scale procurement of high-performance GPU servers with advanced interconnect and memory architectures capable of training large models on domestic datasets, reducing reliance on foreign cloud GPU capacity and strengthening national technological autonomy. Government cloud platforms and digital public infrastructure projects increasingly integrate AI services such as document intelligence, video analytics, and predictive governance systems, all of which demand parallel GPU acceleration beyond conventional CPU servers. Sovereign compute funding also supports academic and research institutions installing GPU supercomputing clusters for AI research and national innovation programs, further expanding the installed AI hardware base. Localization incentives encourage domestic assembly of AI servers, allowing government procurement to prioritize locally integrated GPU systems, which increases domestic deployment scale. Public sector adoption across surveillance analytics, smart city operations, and citizen service platforms adds sustained GPU server demand across ministries and state digital initiatives. National broadband and data center expansion policies enable distributed deployment of AI infrastructure across multiple regions rather than only metropolitan hubs. Startup ecosystems and AI innovation centers accessing sovereign compute platforms further increase utilization of installed GPU capacity, justifying additional procurement cycles. Continuous growth of AI model complexity and dataset scale requires progressively denser GPU clusters, reinforcing sustained demand for AI servers across sovereign infrastructure programs.

Hyperscale GPU Cloud Region Expansion and Enterprise AI Adoption

Global and domestic hyperscale cloud providers are aggressively deploying GPU-enabled data center regions across India to capture enterprise demand for generative AI, machine learning, and advanced analytics delivered through cloud platforms and AI-as-a-service offerings. Enterprises across banking, e-commerce, manufacturing, telecom, and media sectors are integrating AI into fraud detection, personalization, predictive maintenance, and content generation workflows, increasing utilization of cloud GPU clusters and prompting hyperscalers to install additional AI server racks to maintain performance and availability. Cloud providers differentiate through specialized GPU instances, high-bandwidth networking fabrics, and integrated AI software stacks requiring dense deployment of advanced GPU accelerators across multiple availability zones. Subscription-based AI infrastructure consumption shifts GPU hardware ownership toward hyperscalers, resulting in bulk procurement contracts that significantly expand installed AI server capacity within Indian data centers. Enterprises with data sovereignty or latency requirements adopt hybrid AI infrastructure combining on-premise GPU clusters with cloud bursting capability, increasing overall hardware demand across both segments. Telecom operators deploying edge AI services for 5G analytics and video processing integrate GPU-accelerated servers into network infrastructure, adding distributed GPU deployment layers. Hyperscalers also partner with government and research institutions to host national AI platforms and supercomputing workloads domestically, further increasing local GPU installations. Rapid growth in AI model size and training complexity increases GPU density per cluster, meaning each deployment requires more accelerators than prior generations. These combined hyperscale expansion and enterprise adoption dynamics are structurally accelerating AI server procurement across India’s digital infrastructure landscape.

Market Challenges

Dependence on Imported Advanced GPU Accelerators and Semiconductor Supply Constraints

India’s AI servers and GPU hardware ecosystem relies heavily on imported advanced accelerators and high-bandwidth memory produced primarily in the United States, Taiwan, and South Korea, creating structural supply vulnerability and cost exposure for domestic deployments. Export controls and geopolitical restrictions affecting advanced AI chip trade can limit availability of high-performance GPUs required for large model training and advanced analytics workloads, constraining timelines for sovereign and hyperscale AI infrastructure expansion. Global demand concentration among hyperscalers in major markets often diverts accelerator allocation away from emerging markets, extending procurement lead times and reducing hardware availability for Indian deployments. Currency volatility and import duties introduce additional cost uncertainty for AI server procurement programs that require predictable long-term budgeting. Domestic semiconductor manufacturing initiatives remain in early stages, leaving India without indigenous production of cutting-edge GPU accelerators and dependent on external ecosystems. Integration of imported GPUs into locally assembled servers also requires proprietary firmware, interconnect technologies, and software stacks controlled by global vendors, limiting technological independence. Supply disruptions or allocation prioritization toward larger regions can delay hyperscale and public AI projects in India, affecting national digital and AI adoption objectives. Lack of domestic advanced packaging and high-bandwidth memory production further constrains localization of GPU hardware systems. These structural dependencies pose persistent challenges to scaling AI server deployment at the pace required by India’s expanding AI ecosystem.

High Energy Density and Data Center Infrastructure Constraints for GPU Server Deployment

AI servers equipped with high-performance GPUs draw substantially more electrical power and require substantially more cooling capacity than conventional enterprise servers, creating significant infrastructure challenges for large-scale deployment across India’s data center landscape. Many legacy facilities were designed for lower-density IT loads and require extensive electrical upgrades, advanced cooling systems, and redesigned rack power distribution to accommodate GPU-dense clusters, increasing capital expenditure and deployment timelines. Electricity availability and regional cost variability affect site selection for AI server installations, as operators must ensure stable high-capacity power supply for continuous training workloads. GPU servers often require liquid cooling or advanced airflow architectures not widely deployed in older data centers, necessitating retrofits or new facility construction. Environmental and sustainability regulations are increasing scrutiny on data center energy consumption, requiring renewable sourcing and efficiency investments that add complexity and cost. Edge AI deployments in telecom and enterprise environments face space, power, and thermal constraints limiting GPU hardware density outside major hubs. Rapid AI workload growth increases aggregate national data center energy demand, raising grid capacity concerns. Limited availability of skilled engineers experienced in high-density AI infrastructure design further complicates deployment and maintenance. These energy and infrastructure constraints represent a significant barrier to rapid GPU server scaling nationwide.

Opportunities

Domestic AI Server Integration and Manufacturing Ecosystem Development

India has a strong opportunity to develop a domestic AI server integration ecosystem assembling imported accelerators into locally designed AI server platforms tailored to national deployment environments and procurement policies. Electronics manufacturing incentives and data center localization policies are encouraging global OEMs and domestic integrators to establish AI server assembly within India, reducing reliance on imported complete systems and shortening deployment cycles. Local integration enables customization for regional power conditions, telecom edge deployments, and government data center requirements, increasing adoption across sectors. Domestic production also supports public procurement preferences for locally manufactured IT hardware, enabling integrators to capture sovereign AI infrastructure contracts. Partnerships between global accelerator vendors and Indian electronics manufacturers can transfer thermal design and system integration expertise into domestic industry. Expanding enterprise AI adoption across industries will increase demand for customized on-premise GPU clusters assembled locally. Development of domestic supply chains for chassis, cooling systems, and power modules can further localize value addition even while accelerators remain imported. Export potential exists for AI servers assembled in India for emerging markets with similar cost and deployment requirements. Establishing this ecosystem can strengthen national technological capability and capture growing regional AI hardware demand.

Edge AI Infrastructure Deployment Across Telecom, Smart Cities, and Industrial Automation

Expansion of 5G networks, smart city programs, and industrial digitalization initiatives across India creates a major opportunity for distributed edge AI server deployment beyond centralized hyperscale data centers. Telecom operators require GPU-accelerated edge servers for video analytics, network optimization, and low-latency AI services within base stations and regional switching facilities. Smart city surveillance, traffic management, and public safety systems generate continuous video streams requiring local AI processing, driving demand for compact GPU hardware across municipal infrastructure. Manufacturing and logistics sectors adopting Industry 4.0 automation rely on machine vision and predictive analytics executed at factory edge locations, creating additional GPU deployment needs. Edge AI reduces latency and bandwidth costs compared to centralized processing, making it economically viable for real-time applications. Government digital infrastructure programs increasingly incorporate edge AI hardware procurement across multiple cities. Local integrators and telecom equipment vendors can develop specialized rugged GPU servers optimized for power efficiency and environmental resilience. Distributed AI infrastructure also supports data sovereignty by enabling local processing within domestic networks. Convergence of telecom, urban, and industrial digitalization thus represents a major opportunity for expanding AI server deployment across India’s physical infrastructure.

Future Outlook

The India AI servers and GPU hardware market is expected to expand steadily as hyperscale GPU cloud regions, sovereign AI compute programs, and enterprise AI adoption intensify across industries. Localization of AI server integration and supportive data center policies will accelerate domestic deployment capacity. Advances in accelerator efficiency and cooling will enable denser GPU installations. Telecom edge AI and industrial automation deployments will broaden demand beyond hyperscale hubs.

Major Players

Key Target Audience

Research Methodology

Step 1: Identification of Key Variables

AI infrastructure demand drivers including hyperscale GPU deployment, sovereign compute programs, enterprise AI adoption, and semiconductor supply constraints were mapped. Hardware categories, deployment platforms, and end-user segments were defined to structure the market model.

Step 2: Market Analysis and Construction

Data center capacity additions, accelerator shipment data, OEM disclosures, and procurement records were triangulated to estimate installed AI server and GPU hardware value. Segment shares were derived from deployment patterns across hyperscale, enterprise, and public infrastructure.

Step 3: Hypothesis Validation and Expert Consultation

Insights from data center operators, server OEMs, semiconductor suppliers, and AI infrastructure specialists validated assumptions on deployment scale, platform distribution, and technology trends. Infrastructure constraints and procurement cycles were cross-checked.

Step 4: Research Synthesis and Final Output

Validated quantitative and qualitative inputs were synthesized into segmentation, competitive landscape, and outlook narratives. Consistency checks ensured alignment between technology adoption, infrastructure expansion, and demand drivers across India.

- Executive Summary

- Research Methodology (Definitions, Scope, Industry Assumptions, Market Sizing Approach, Primary & Secondary Research Framework, Data Collection & Verification Protocol, Analytic Models & Forecast Methodology, Limitations & Research Validity Checks)

- Market Definition and Scope

- Value Chain & Stakeholder Ecosystem

- Regulatory / Certification Landscape

- Sector Dynamics Affecting Demand

- Strategic Initiatives & Infrastructure Growth

- Growth Drivers

National AI Compute Mission and Sovereign GPU Cluster Expansion

Hyperscale Cloud GPU Region Proliferation Across Indian Data Centers

Enterprise AI Adoption Across BFSI, Telecom, and Manufacturing Sectors

5G and Edge AI Infrastructure Deployment by Telecom Operators

Semiconductor and Electronics Manufacturing Localization Incentives

- Market Challenges

Dependence on Imported Advanced GPUs and Semiconductor Supply Risks

High Power Density and Cooling Infrastructure Constraints in Data Centers

Export Controls and Geopolitical Restrictions on AI Accelerator Access

High Capital Cost of Large-Scale GPU Cluster Deployment

Limited Domestic Expertise in AI Hardware Integration and Maintenance

- Market Opportunities

Domestic AI Server Assembly and Integration Ecosystem Development

Edge AI Infrastructure Deployment in Smart Cities and Industrial IoT

Public Sector AI Platforms and National Supercomputing Expansion

- Trends

Transition Toward GPU-Dense Rack-Scale AI Infrastructure

Adoption of Liquid Cooling for High-Performance AI Servers

Growth of AI-as-a-Service GPU Cloud Offerings

Integration of AI Accelerators in Telecom Edge Networks

Shift Toward Sovereign and Localized AI Compute Capacity

- Government Regulations & Defense Policy

National Data Localization and Sovereign Cloud Infrastructure Policies

Semiconductor and Electronics Manufacturing Incentive Schemes

AI Supercomputing and National Digital Infrastructure Programs

- SWOT Analysis

- Stakeholder and Ecosystem Analysis

- Porter’s Five Forces Analysis

- Competition Intensity and Ecosystem Mapping

- By Market Value, 2020-2025

- By Installed Units, 2020-2025

- By Average System Price, 2020-2025

- By System Complexity Tier, 2020-2025

- By System Type (In Value%)

GPU-Accelerated AI Training Servers

AI Inference Accelerator Servers

CPU-GPU Hybrid Compute Servers

Edge AI Servers

High Performance AI Supercomputing Systems

- By Platform Type (In Value%)

Hyperscale Data Center Platforms

Enterprise On-Premise AI Platforms

Telecom Edge AI Platforms

Government Sovereign Compute Platforms

Research and Academic HPC Platforms

- By Fitment Type (In Value%)

Rack-Scale Integrated AI Systems

Blade AI Server Modules

Standalone GPU Accelerator Cards

Preconfigured AI Appliance Systems

Edge-Optimized Rugged AI Nodes

- By End User Segment (In Value%)

Hyperscale Cloud Providers

Enterprise Data Center Operators

Government and Public Sector Agencies

Telecom Network Operators

Research and Academic Institutions

- Market structure and competitive positioning

Market share snapshot of major players

- Cross Comparison Parameters (GPU Architecture, Server Form Factor, Interconnect Technology, Cooling Method, Deployment Platform, Target Workload, Power Density, Memory Bandwidth, Integration Model)

- SWOT Analysis of Key Competitors

- Pricing & Procurement Analysis

- Key Players

NVIDIA

Advanced Micro Devices

Intel

Hewlett Packard Enterprise

Dell Technologies

Super Micro Computer

Lenovo

Cisco Systems

Inspur Information

ASUS

Gigabyte Technology

Quanta Computer

Wistron

Foxconn

Tata Electronics

- Hyperscale cloud providers expanding GPU clusters across multiple regions

- Enterprises adopting on-premise AI infrastructure for data sovereignty needs

- Government agencies deploying sovereign AI compute and analytics platforms

- Telecom operators integrating edge AI servers within 5G networks

- Forecast Market Value, 2026-2035

- Forecast Installed Units, 2026-2035

- Price Forecast by System Tier, 2026-2035

- Future Demand by Platform, 2026-2035