Market Overview

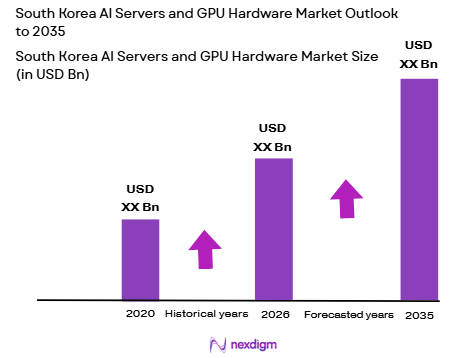

The South Korea AI servers and GPU hardware market is valued at approximately USD ~ billion based on a recent historical assessment, driven by accelerated hyperscale data center investment, enterprise AI adoption, and national semiconductor leadership. Government-backed AI infrastructure programs and a strong domestic chip manufacturing ecosystem support high-performance server deployments. Expanding generative AI workloads across the telecom, manufacturing, and finance sectors further raise demand for GPU-accelerated computing systems and specialized AI server architectures in enterprise and cloud environments.

The Seoul metropolitan region dominates the South Korea AI servers and GPU hardware market due to its concentration of hyperscale data centers, semiconductor fabrication clusters, and leading technology conglomerates. Gyeonggi Province hosts major chip foundries and advanced packaging facilities that anchor AI hardware supply chains. Busan and Incheon are emerging infrastructure hubs supported by subsea connectivity and logistics advantages. National digital transformation initiatives and proximity to large enterprise customers reinforce regional leadership in AI computing infrastructure deployment and innovation.

Market Segmentation

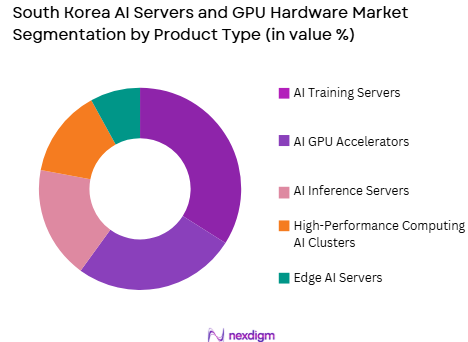

By Product Type

The South Korea AI servers and GPU hardware market is segmented by product type into AI GPU accelerators, AI training servers, AI inference servers, edge AI servers, and high-performance computing AI clusters. AI training servers currently hold the dominant market share, driven by hyperscale model development, domestic semiconductor integration, availability of advanced cooling infrastructure, and enterprise demand for large-scale model training capabilities. National AI initiatives emphasize sovereign AI model creation, which requires dense GPU training architectures. Leading Korean technology firms deploy large training clusters to support language, robotics, and industrial AI applications, sustaining higher procurement volumes for training servers than for inference or edge systems.

By End-Use Industry

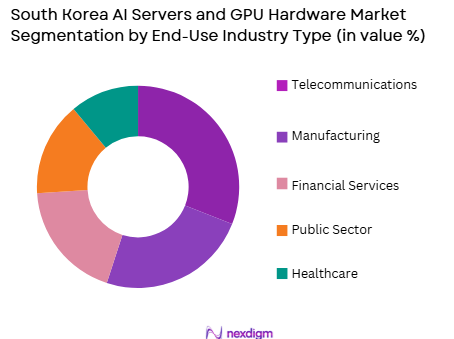

The South Korea AI servers and GPU hardware market is segmented by end-use industry into telecommunications, manufacturing, financial services, healthcare, and public sector. Telecommunications currently holds the dominant market share, driven by nationwide 5G and AI network optimization programs, cloud-native telecom platforms, and large-scale subscriber analytics workloads. Telecom operators deploy GPU-based AI infrastructure for network automation, edge computing, and real-time data processing. Integration of AI into core network operations and customer experience platforms increases server density requirements, making telecom the largest infrastructure buyer among industries adopting AI hardware in South Korea.

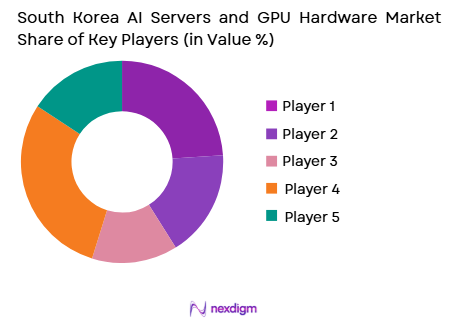

Competitive Landscape

The South Korea AI servers and GPU hardware market shows moderate consolidation with strong influence from global GPU vendors and domestic electronics conglomerates. Partnerships between semiconductor manufacturers, cloud providers, and server OEMs shape competitive positioning. Local firms integrate domestically produced memory and advanced packaging with imported GPUs, while global players maintain leadership in accelerator architecture. Strategic alliances with telecom operators and hyperscalers determine large contract allocations and infrastructure deployment scale.

| Company Name | Establishment Year | Headquarters | Technology Focus | Market Reach | Key Products | Revenue | Domestic Integration Capability |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Samsung Electronics | 1969 | Suwon, South Korea | ~ | ~ | ~ | ~ | ~ |
| SK hynix | 1983 | Icheon, South Korea | ~ | ~ | ~ | ~ | ~ |
| NVIDIA | 1993 | Santa Clara, USA | ~ | ~ | ~ | ~ | ~ |
| Dell Technologies | 1984 | Texas, USA | ~ | ~ | ~ | ~ | ~ |
| HPE | 1939 | Texas, USA | ~ | ~ | ~ | ~ | ~ |

South Korea AI Servers and GPU Hardware Market Analysis

Growth Drivers

National Sovereign AI Infrastructure Investment Programs

South Korea’s strategic commitment to sovereign artificial intelligence capability is accelerating procurement of domestic AI servers and GPU hardware, supported by coordinated public and private sector funding initiatives. Government digital infrastructure policies prioritize localized AI compute capacity to reduce dependence on foreign cloud providers and ensure data governance control. Public sector AI projects across defense, language modeling, healthcare analytics, and smart cities require high-density GPU clusters integrated with domestic semiconductor components. Major conglomerates align capital expenditure with national AI roadmaps, expanding hyperscale training facilities and enterprise AI platforms. Subsidies and tax incentives encourage local manufacturing of AI servers using domestic memory and packaging technologies. Telecom and cloud operators co-invest in national AI data centers to host foundational models and public AI services. Research institutions deploy exascale-class GPU systems for scientific and industrial AI applications. Policy emphasis on semiconductor value chain resilience strengthens demand for domestically assembled AI infrastructure. These structural investments collectively sustain high baseline demand for AI servers and GPU hardware across sectors and regions within the national technology ecosystem.

Hyperscale Data Center Expansion and Generative AI Workload Growth

Rapid expansion of hyperscale data centers in South Korea is driving substantial deployment of GPU-accelerated AI servers to support generative AI training and inference workloads across industries. Cloud providers and telecom operators are scaling AI infrastructure capacity to host large language models, recommendation systems, and autonomous control algorithms. Enterprise adoption of generative AI in manufacturing automation, robotics, finance analytics, and customer engagement increases computational intensity per workload. GPU clusters with advanced interconnects and high-bandwidth memory are essential for parallel training and real-time inference at scale. Rising data localization requirements incentivize domestic hosting of AI models, further expanding local data center capacity. Edge-cloud integration in smart factories and 5G networks increases distributed AI server deployment. Power and cooling innovations enable denser GPU racks within existing facilities, raising hardware procurement volumes. Strategic partnerships between GPU vendors and Korean OEMs accelerate system availability. Continuous growth of AI service consumption across digital platforms sustains long-term demand for high-performance AI servers and accelerator hardware nationwide.

Market Challenges

Dependence on Foreign GPU Architectures and Export Controls

South Korea’s AI server ecosystem relies heavily on imported GPU accelerators, creating exposure to geopolitical export controls and supply restrictions affecting availability of advanced AI chips. Leading GPU architectures originate from foreign vendors, limiting domestic control over performance roadmaps and pricing structures. Trade policy uncertainties can delay procurement cycles and disrupt hyperscale infrastructure planning. Local server manufacturers must adapt system designs around externally defined GPU interfaces and software ecosystems. Supply constraints elevate hardware costs and reduce deployment flexibility for domestic AI projects. Strategic efforts to develop indigenous AI accelerators remain in early stages compared with global leaders. Enterprises face integration risks when hardware availability fluctuates due to export licensing changes. Dependence on external chip supply also affects national data sovereignty objectives. These structural vulnerabilities constrain predictable scaling of AI infrastructure across sectors. Addressing the challenge requires long-term domestic accelerator innovation and diversified sourcing strategies.

Power Density, Cooling, and Energy Sustainability Constraints in AI Data Centers

High-performance GPU servers impose extreme power density and thermal loads that challenge South Korea’s data center infrastructure and energy sustainability goals. AI training clusters consume substantial electricity, stressing urban power grids where most facilities are concentrated. Advanced cooling solutions such as liquid immersion and direct-to-chip cooling increase capital costs and operational complexity. Data center operators must balance AI infrastructure expansion with national carbon reduction commitments. Limited availability of suitable sites with sufficient power and cooling capacity restricts hyperscale facility growth near major cities. Energy price volatility affects operating economics of GPU-intensive workloads. Thermal management constraints also limit achievable rack density in existing facilities. Environmental regulations governing energy efficiency and heat discharge impose compliance burdens. These factors collectively slow deployment of large-scale AI server clusters. Sustainable AI infrastructure requires coordinated power, cooling, and site planning innovations.

Opportunities

Development of Domestic AI Accelerators and HBM-Integrated Server Platforms

South Korea’s leadership in advanced memory and semiconductor packaging creates an opportunity to develop competitive domestic AI accelerators tightly integrated with high-bandwidth memory (HBM) and server platforms. Local chip manufacturers can leverage expertise in HBM and 3D packaging to design specialized AI processors optimized for training and inference workloads. Integrating domestic accelerators into locally assembled AI servers reduces reliance on foreign GPU suppliers and strengthens technology sovereignty. Government semiconductor initiatives support research, fabrication, and ecosystem development for AI chips. Collaboration between universities, foundries, and electronics firms accelerates architecture innovation. Domestic AI accelerators tailored to national language and industrial workloads can differentiate on performance. Packaging integration with memory stacks improves energy efficiency and throughput. Export potential exists for regionally competitive AI server solutions. Success in this area would reshape the competitive positioning of Korean hardware vendors globally. The opportunity aligns with national semiconductor strategy and AI infrastructure demand.

AI Infrastructure Export and Regional Data Center Partnerships in Asia

South Korea’s advanced semiconductor and electronics ecosystem enables expansion of AI server and GPU hardware exports to emerging AI infrastructure markets across Asia. Regional economies are investing in data centers and AI compute capacity but lack domestic hardware manufacturing capability. Korean firms can supply integrated AI server platforms combining memory, packaging, and system engineering expertise. Strategic partnerships with telecom and cloud operators in Southeast Asia and the Middle East enable overseas deployment of Korean AI infrastructure. Export-oriented manufacturing scales domestic production volumes and reduces unit costs. Participation in international AI data center projects strengthens global market presence. Cross-border AI cloud collaborations expand demand for Korean hardware. Government trade agreements and technology diplomacy support regional expansion. This opportunity diversifies revenue beyond domestic demand. Korean AI hardware vendors can position themselves as reliable suppliers for sovereign AI initiatives globally. Expansion of regional AI ecosystems sustains long-term export growth potential.

Future Outlook

The South Korea AI servers and GPU hardware market is expected to expand steadily as sovereign AI strategies, hyperscale data center construction, and enterprise generative AI adoption accelerate infrastructure demand. Advances in domestic semiconductor packaging and memory integration will support local AI hardware innovation. Energy-efficient cooling and power solutions will enable denser GPU deployments. Regulatory focus on data localization and AI competitiveness will sustain national investment in AI compute capacity.

Major Players

- Samsung Electronics

- SK hynix

- NVIDIA

- Dell Technologies

- Hewlett Packard Enterprise

- Supermicro

- Lenovo

- Cisco Systems

- LG Electronics

- KT Corp

- SK Telecom

- Rebellions

- FuriosaAI

- KTNF

- Gigabyte

Key Targets

- Hyperscale cloud providers

- Telecommunications operators

- Semiconductor manufacturers

- Data center operators

- AI software platform companies

- Investment and venture capital firms

- Government and regulatory bodies

- Industrial automation enterprises

Research Methodology

Step 1: Identification of Key Variables

Key variables include AI server shipments, GPU accelerator deployments, hyperscale data center capacity, semiconductor supply chain metrics, and enterprise AI adoption indicators. Variables are mapped across product, industry, and regional dimensions to define market structure.

Step 2: Market Analysis and Construction

Supply-side analysis evaluates server OEM production, semiconductor output, and GPU availability, while demand-side analysis examines hyperscale, telecom, and enterprise procurement trends. Data triangulation constructs market size and segmentation estimates.

Step 3: Hypothesis Validation and Expert Consultation

Industry experts from semiconductor firms, cloud providers, and data center operators validate assumptions on AI infrastructure growth, technology adoption, and deployment constraints. Feedback refines segmentation shares and competitive positioning.

Step 4: Research Synthesis and Final Output

Validated datasets and qualitative insights are synthesized into market forecasts, competitive analysis, and strategic outlook. Consistency checks ensure alignment across market size, segmentation, and trend narratives before final reporting.

- Executive Summary

- Research Methodology (Definitions, Scope, Industry Assumptions, Market Sizing Approach, Primary & Secondary Research Framework, Data Collection & Verification Protocol, Analytic Models & Forecast Methodology, Limitations & Research Validity Checks)

- Market Definition and Scope

- Value Chain & Stakeholder Ecosystem

- Regulatory / Certification Landscape

- Sector Dynamics Affecting Demand

- Strategic Initiatives & Infrastructure Growth

- Growth Drivers
  - National AI and semiconductor leadership investments
  - Expansion of hyperscale AI data centers and cloud services
  - AI adoption in automotive, robotics, and electronics industries

- Market Challenges
  - Dependence on imported high-end GPUs and accelerators
  - High energy and cooling requirements of AI clusters
  - Data center space and power constraints in urban regions

- Market Opportunities
  - Domestic AI supercomputing and sovereign AI clusters
  - AI integration in smart manufacturing and robotics
  - Autonomous mobility and intelligent transport AI systems

- Trends
  - Adoption of liquid-cooled high-density GPU servers
  - Integration of AI accelerators across telecom and edge
  - Convergence of AI and semiconductor hardware ecosystems

- Government Regulations
  - National AI and semiconductor development policies
  - Data governance and AI ethics compliance frameworks
  - High-performance computing and supercomputing programs

- SWOT Analysis

- Porter's Five Forces

- By Market Value, 2020-2025

- By Installed Units, 2020-2025

- By Average System Price, 2020-2025

- By System Complexity Tier, 2020-2025

- By System Type (In Value %)
  - GPU-Accelerated AI Servers
  - AI Training Supercomputers
  - AI Inference Servers
  - High-Density GPU Racks
  - Edge AI Servers

- By Platform Type (In Value %)
  - Hyperscale Data Centers
  - Enterprise AI Infrastructure
  - Telecom AI Cloud Platforms
  - Research and Academic HPC
  - Autonomous Systems Compute

- By Fitment Type (In Value %)
  - New AI Infrastructure Deployment
  - GPU Cluster Expansion
  - Accelerator Retrofit Upgrades
  - Modular AI Rack Integration
  - Cloud-Managed AI Hardware

- By End User Segment (In Value %)
  - Cloud and Internet Companies
  - Telecommunications Operators
  - Automotive and Robotics Firms
  - Electronics and Semiconductor Companies
  - Government and Research Institutes

- By Procurement Channel (In Value %)
  - Direct OEM Procurement
  - Accelerator Vendor Supply
  - System Integrator Deployment
  - Cloud Provider Procurement
  - Government AI Programs

- Market Share Analysis

- Cross Comparison Parameters (GPU Compute Density, Training Throughput Performance, Inference Latency Optimization, Energy Efficiency per TFLOP, Memory Bandwidth and Capacity, Interconnect Bandwidth and Topology, Cluster Scalability Architecture, Cooling and Thermal Design, AI Software Stack Compatibility, Rack Power Density, Deployment Flexibility, Sovereign AI Compliance Readiness)

- SWOT Analysis of Key Competitors

- Pricing & Procurement Analysis

- Key Players
  - Samsung Electronics
  - SK hynix
  - FuriosaAI
  - Rebellions
  - HyperAccel
  - Naver Cloud
  - Kakao Enterprise
  - KT Corporation
  - SK Telecom
  - LG CNS
  - Dell Technologies Korea
  - Hewlett Packard Enterprise Korea
  - Lenovo Korea
  - Supermicro Korea
  - NVIDIA Korea

- Cloud providers expanding GPU clusters for AI services

- Telecom operators deploying AI infrastructure for networks

- Automotive and robotics firms building AI compute capacity

- Government and academia investing in national AI HPC

- Forecast Market Value, 2026-2035

- Forecast Installed Units, 2026-2035

- Price Forecast by System Tier, 2026-2035

- Future Demand by Platform, 2026-2035