Market Overview

The USA AI Servers and GPU Hardware market reached approximately USD ~ billion based on a recent historical assessment, driven by hyperscale data center expansion and accelerated artificial intelligence workload deployment across cloud and enterprise environments. Large scale training and inference models require high performance GPU clusters and specialized AI servers optimized for parallel processing and memory bandwidth. Investment from hyperscale cloud providers, generative AI platforms, and enterprise digital transformation initiatives continues to expand high density compute infrastructure across the national technology ecosystem.

Primary deployment concentration is observed across Northern Virginia, Silicon Valley, Seattle, and Dallas–Fort Worth due to hyperscale data center campuses, semiconductor design hubs, and cloud provider headquarters. These regions benefit from dense fiber connectivity, power availability, and advanced engineering talent pools supporting large scale AI infrastructure operations. Secondary growth corridors in Phoenix and Columbus are emerging due to lower energy costs and data center friendly regulatory frameworks, sustaining regional expansion of AI server and GPU hardware installations.

Market Segmentation

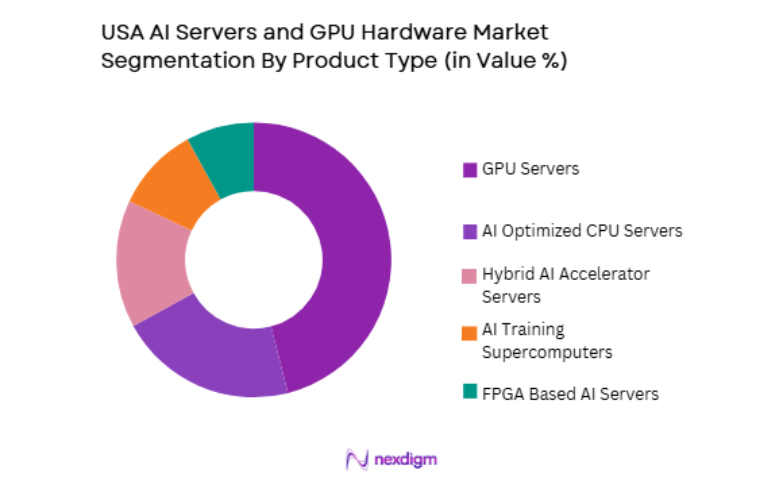

By Product Type

The USA AI Servers and GPU Hardware market is segmented by product type into GPU servers, AI optimized CPU servers, FPGA based AI servers, hybrid AI accelerator servers, and AI training supercomputers. GPU servers currently hold the dominant market share, driven by the computational demands of modern AI workloads. Large language models, generative AI systems, and deep learning workloads require massively parallel computation architectures that GPUs provide more efficiently than alternative accelerators. Hyperscale cloud providers deploy GPU dense racks to support AI training clusters and inference services at scale. Enterprise AI adoption across finance, healthcare, and retail increasingly depends on GPU accelerated infrastructure for analytics and automation workloads.

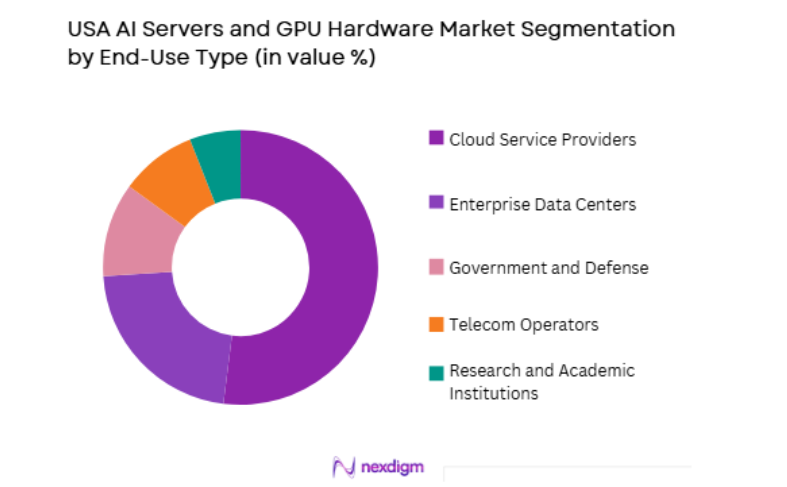

By End Use

The USA AI Servers and GPU Hardware market is segmented by end use into cloud service providers, enterprise data centers, research and academic institutions, government and defense, and telecom operators. Cloud service providers currently hold the dominant market share because hyperscale cloud platforms host the majority of AI training and inference workloads for enterprises and AI developers, requiring large scale GPU clusters and AI optimized servers. Cloud providers offer on demand AI compute services, enabling scalable access to GPU infrastructure without capital investment by users. Generative AI platforms and SaaS providers deploy workloads primarily on hyperscale infrastructure.

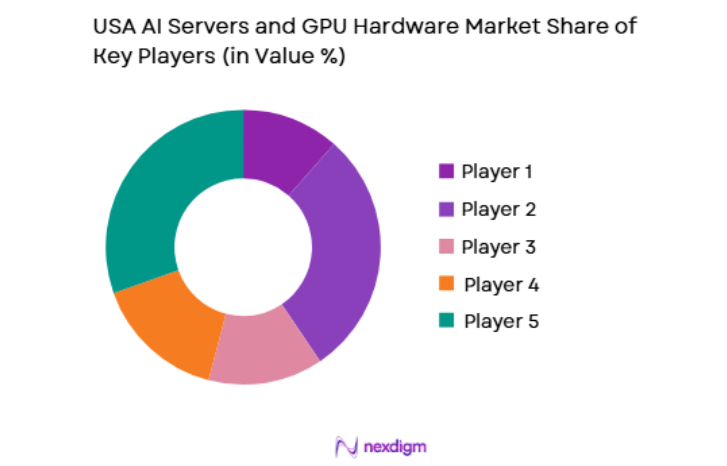

Competitive Landscape

The USA AI Servers and GPU Hardware market is highly concentrated around semiconductor leaders and hyperscale infrastructure providers that dominate accelerator design, AI server manufacturing, and large scale deployment ecosystems. Vertical integration across chip design, server systems, and AI software platforms strengthens competitive positioning. Hyperscale cloud firms act as anchor buyers shaping hardware standards, while OEM server manufacturers integrate advanced accelerators into enterprise and data center solutions.

| Company Name | Establishment Year | Headquarters | Technology Focus | Market Reach | Key Products | Revenue | AI Accelerator Type |
| --- | --- | --- | --- | --- | --- | --- | --- |
| NVIDIA | 1993 | Santa Clara, USA | ~ | ~ | ~ | ~ | ~ |
| AMD | 1969 | Santa Clara, USA | ~ | ~ | ~ | ~ | ~ |
| Intel | 1968 | Santa Clara, USA | ~ | ~ | ~ | ~ | ~ |
| Dell Technologies | 1984 | Round Rock, USA | ~ | ~ | ~ | ~ | ~ |
| HPE | 1939 | Houston, USA | ~ | ~ | ~ | ~ | ~ |

USA AI Servers and GPU Hardware Market Analysis

Growth Drivers

Hyperscale AI Model Training Infrastructure Expansion

Rapid proliferation of large language models, generative artificial intelligence systems, and multimodal deep learning architectures is driving unprecedented demand for GPU dense servers and high performance AI hardware clusters across hyperscale data center environments in the United States. Training frontier scale AI models requires thousands of interconnected GPUs with high bandwidth memory and ultra fast interconnect fabrics capable of parallel computation across massive datasets. Hyperscale cloud providers and AI technology firms are investing heavily in dedicated AI supercomputing clusters to support model development and commercial AI services. Continuous scaling of model parameters and dataset sizes increases compute intensity and hardware requirements per training cycle. AI training infrastructure also requires optimized cooling, power delivery, and rack level integration supporting extreme thermal densities. Semiconductor advances in GPU architectures and AI accelerators enable rapid growth in compute throughput. Demand from generative AI startups and enterprise AI initiatives further expands procurement volumes.

Enterprise AI Adoption and Inference Acceleration Demand

Broad adoption of artificial intelligence across enterprise sectors including finance, healthcare, retail, manufacturing, and cybersecurity is expanding demand for GPU accelerated servers and AI optimized hardware infrastructure for real time inference and analytics workloads within corporate data centers and cloud environments. Enterprises increasingly deploy machine learning models for automation, forecasting, personalization, and decision intelligence applications requiring continuous inference processing. Unlike centralized model training, inference workloads scale horizontally across business operations and customer facing systems, requiring widespread deployment of AI servers. Real time analytics in fraud detection, medical imaging, supply chain optimization, and intelligent automation rely on GPU or AI accelerator hardware. Integration of AI into enterprise software platforms drives embedded inference infrastructure adoption. Vendors offer turnkey AI server appliances simplifying enterprise deployment. Hybrid cloud architectures also require on premise inference hardware integrated with cloud training environments.

Market Challenges

GPU Supply Chain Constraints and Advanced Semiconductor Manufacturing Dependence

Production of advanced GPUs and AI accelerators relies on leading edge semiconductor fabrication processes, advanced packaging technologies, and high bandwidth memory components concentrated among a limited number of global suppliers, creating supply constraints and procurement bottlenecks that affect expansion of the USA AI Servers and GPU Hardware market. Cutting edge AI chips require sub five nanometer fabrication nodes and complex chiplet packaging techniques available only at a few semiconductor foundries. Demand surges from hyperscale cloud providers and AI firms often exceed manufacturing capacity, causing allocation constraints and extended delivery timelines for AI servers. High bandwidth memory supply is similarly concentrated among a few memory manufacturers, limiting accelerator output scalability. Geopolitical export controls and technology restrictions also affect supply chains for advanced chips and interconnect components. Dependence on overseas fabrication for advanced nodes introduces strategic vulnerabilities.

Extreme Power Density and Data Center Infrastructure Limitations

AI server clusters and GPU dense racks operate at extremely high power and thermal densities far exceeding traditional data center infrastructure design thresholds, creating engineering, operational, and cost challenges for large scale deployment across hyperscale and enterprise environments in the United States. Modern GPU servers may consume tens of kilowatts per rack, requiring advanced liquid cooling, high capacity power distribution, and reinforced floor loading. Retrofitting existing data centers to support AI hardware often involves significant infrastructure upgrades including cooling systems, transformers, and electrical distribution. Power availability constraints in major data center hubs limit expansion of AI clusters. Energy efficiency and sustainability requirements further complicate deployment economics. Heat dissipation and reliability management are critical for continuous GPU operation. Infrastructure readiness gaps slow rollout of AI hardware capacity.

Opportunities

AI Specific Data Center Design and Liquid Cooling Ecosystem Growth

Escalating thermal densities and power requirements of next generation GPU and AI server clusters are catalyzing development of specialized AI optimized data center architectures incorporating liquid cooling, immersion cooling, high capacity power delivery, and modular rack scale integration across the United States infrastructure ecosystem. Dedicated AI data centers are being designed around GPU workloads rather than general purpose computing, enabling higher rack densities and improved energy efficiency. Liquid cooling technologies allow deployment of advanced accelerators operating beyond air cooling limits. Infrastructure vendors and utilities are investing in power distribution and cooling innovation tailored to AI hardware. Modular AI ready facilities enable faster deployment and scalability. Energy efficient cooling reduces operational costs and environmental impact. This emerging infrastructure segment expands capacity for AI server deployment.

Custom AI Accelerators and Domain Specific Hardware Development

Increasing specialization of artificial intelligence workloads across sectors such as healthcare imaging, financial analytics, autonomous systems, and cybersecurity is creating demand for domain specific AI accelerators and customized server hardware architectures optimized for particular model types and computational patterns within the United States technology ecosystem. While general purpose GPUs dominate current deployments, application specific integrated circuits and customized accelerators offer efficiency advantages for targeted workloads. Hyperscale cloud providers and semiconductor firms are developing proprietary AI chips tailored to internal services. Edge AI and inference appliances also require specialized hardware. Industry specific optimization improves performance per watt and cost efficiency. Custom accelerators expand diversity of AI hardware architectures. Vendors integrating specialized chips into AI servers address niche performance requirements.

Future Outlook

The USA AI Servers and GPU Hardware market is expected to expand significantly over the next five years as artificial intelligence adoption accelerates across industries and hyperscale infrastructure investment continues. Advancements in GPU architectures, AI accelerators, and liquid cooling technologies will enable higher density deployments. Government initiatives supporting semiconductor manufacturing and AI innovation will reinforce domestic supply chains. Demand from cloud providers, enterprises, and defense sectors will sustain large scale AI hardware procurement.

Major Players

- NVIDIA

- AMD

- Intel

- Dell Technologies

- Hewlett Packard Enterprise

- Supermicro

- Lenovo

- IBM

- Cisco Systems

- Quanta Computer

- Inspur Systems

- Amazon Web Services

- Microsoft

- Meta

Key Target Audience

- Cloud service providers

- Enterprise data center operators

- Telecom operators

- Government and defense agencies

- Semiconductor manufacturers

- Healthcare technology providers

- Investment and venture capital firms

- Government and regulatory bodies

Research Methodology

Step 1: Identification of Key Variables

Core variables including AI workload growth, hyperscale data center expansion, semiconductor innovation cycles, and enterprise AI adoption rates were identified. Hardware architectures, accelerator technologies, and deployment environments were mapped to understand structural drivers shaping USA AI Servers and GPU Hardware market dynamics.

Step 2: Market Analysis and Construction

The technology value chain across semiconductor fabrication, accelerator design, server manufacturing, and data center deployment was analyzed to construct segmentation and competitive structure. Procurement patterns from hyperscale, enterprise, and government sectors were evaluated to estimate market distribution and hardware demand.

Step 3: Hypothesis Validation and Expert Consultation

Findings were validated through consultations with semiconductor engineers, data center architects, AI infrastructure specialists, and enterprise IT leaders. Deployment constraints, performance requirements, and supply chain realities were assessed to refine USA AI Servers and GPU Hardware market assumptions.

Step 4: Research Synthesis and Final Output

Validated insights and quantitative indicators were synthesized into a structured market framework covering segmentation, competitive positioning, growth drivers, and future outlook. Cross industry AI adoption patterns and infrastructure evolution were incorporated to produce the final USA AI Servers and GPU Hardware market report.

- Executive Summary

- Research Methodology (Definitions, Scope, Industry Assumptions, Market Sizing Approach, Primary & Secondary Research Framework, Data Collection & Verification Protocol, Analytic Models & Forecast Methodology, Limitations & Research Validity Checks)

- Market Definition and Scope

- Value Chain & Stakeholder Ecosystem

- Regulatory / Certification Landscape

- Sector Dynamics Affecting Demand

- Growth Drivers

  - Generative AI training demand driving large scale GPU server deployments

  - Enterprise adoption of AI analytics increasing inference server refresh cycles

  - National research and defense computing programs expanding HPC capacity needs

- Market Challenges

  - GPU supply constraints and allocation volatility impacting deployment timelines

  - Power density and cooling limitations increasing total infrastructure complexity

  - Software stack fragmentation and rapid GPU roadmap changes raising integration risk

- Market Opportunities

  - Liquid cooling upgrades and high density retrofit programs across data centers

  - Sovereign and regulated AI compute builds for government and critical sectors

  - AI optimized networking and storage bundles paired with GPU server clusters

- Trends

  - Shift toward rack scale architectures with high speed GPU interconnect fabrics

  - Rising adoption of direct to chip and liquid cooling for high TDP accelerators

  - Growth of consumption based GPU capacity models through managed providers

- Government Regulations

  - Export control and licensing rules influencing accelerator supply chains

  - Federal cybersecurity and supply chain risk requirements for critical IT procurement

  - Energy efficiency and data center environmental compliance at state and local levels

- SWOT Analysis

- Porter's Five Forces

- By Market Value, 2020-2025

- By Installed Units, 2020-2025

- By Average System Price, 2020-2025

- By System Complexity Tier, 2020-2025

- By System Type (In Value%)

  - GPU Accelerated AI Training Servers

  - AI Inference Edge Servers and Appliances

  - High Density GPU Server Racks and Pods

  - HPC AI Clusters and Supercomputing Nodes

  - Integrated AI Systems with High Speed Interconnects

- By Platform Type (In Value%)

  - Hyperscale Cloud AI Infrastructure

  - Enterprise Private AI Data Centers

  - Colocation AI Ready Facilities

  - Government and Research HPC Facilities

  - Managed AI Infrastructure Services

- By Fitment Type (In Value%)

  - Greenfield AI Data Center Builds

  - Retrofit of Existing Data Center Halls

  - Rack Scale Modular Deployments

  - On Premise Enterprise Installations

  - Hybrid Cloud Burst and On Demand Augmentation

- By End User Segment (In Value%)

  - Cloud Service Providers and Hyperscalers

  - AI First Startups and Model Developers

  - Financial Services and FinTech

- Market Share Analysis

- Cross Comparison Parameters (GPU platform availability and roadmap, Server and rack scale design depth, Cooling and power density capability, Supply chain and lead time resilience, Software stack and validation ecosystem, Service and support coverage)

- SWOT Analysis of Key Competitors

- Pricing & Procurement Analysis

- Key Players

  - NVIDIA

  - AMD

  - Intel

  - Dell Technologies

  - Hewlett Packard Enterprise

  - Super Micro Computer

  - Lenovo

  - Cisco Systems

  - IBM

  - Microsoft

  - Amazon Web Services

  - Google

  - Oracle

  - Equinix

  - Schneider Electric

- Hyperscalers prioritize fastest time to deploy with standardized rack scale GPU designs

- Enterprises focus on inference capacity, governance, and secure on premises integration

- Research users demand high bandwidth interconnects and optimized parallel workloads

- Public sector buyers emphasize compliance, trusted supply chains, and long lifecycle support

- Forecast Market Value, 2026-2035

- Forecast Installed Units, 2026-2035

- Price Forecast by System Tier, 2026-2035

- Future Demand by Platform, 2026-2035