Market Overview

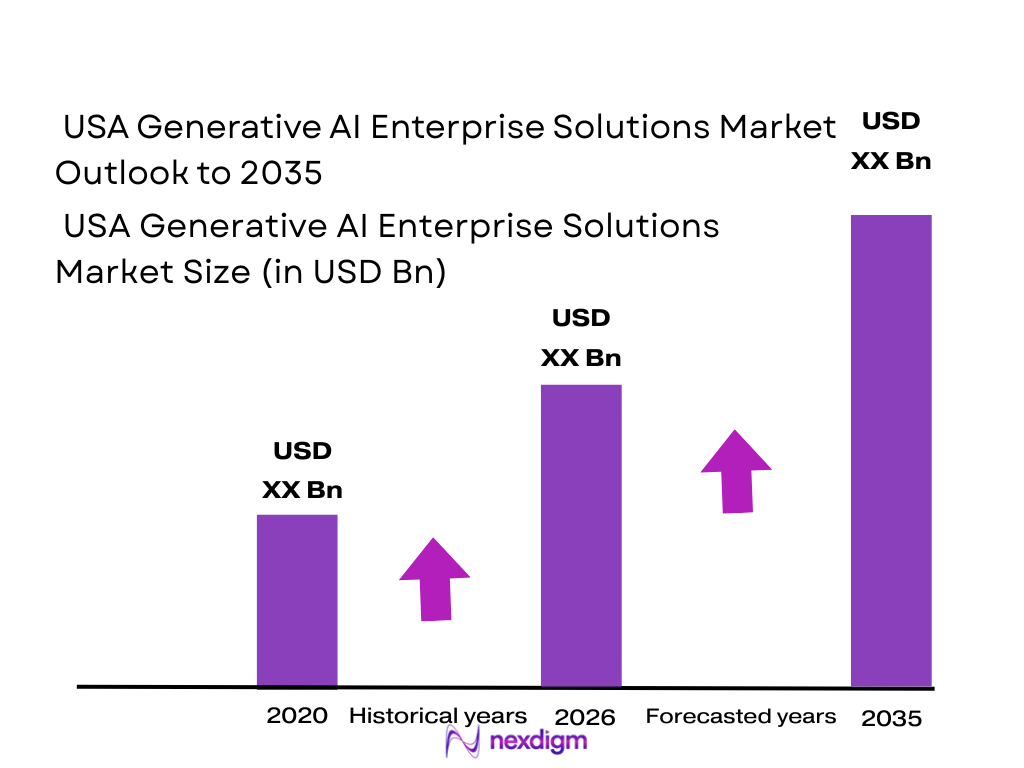

The USA Generative AI Enterprise Solutions Competitive Intelligence & Benchmarking Market is being shaped by a sharp jump in enterprise software adoption, model access spending, and implementation services. The broader U.S. generative AI market rose from USD ~ billion to USD ~ billion, while the U.S. enterprise generative AI layer reached USD 955.3 million as firms moved from pilot use into governed business deployment.

Within the U.S., the market is led by the San Francisco Bay Area, Seattle, New York City, Northern Virginia, and the Austin/San Jose corridors because these hubs combine frontier model labs, hyperscaler ecosystems, venture capital density, enterprise technology buyers, and AI-ready infrastructure. U.S. private AI investment reached USD 109.1 billion, and the U.S. widened its generative AI funding lead over China plus the EU and UK combined from USD 21.8 billion to USD 25.4 billion. Meanwhile, primary U.S. data-center supply rose to 6,922.6 MW, with vacancy at just 2.8%, reinforcing why deployment gravitates to these technology corridors.

Market Segmentation

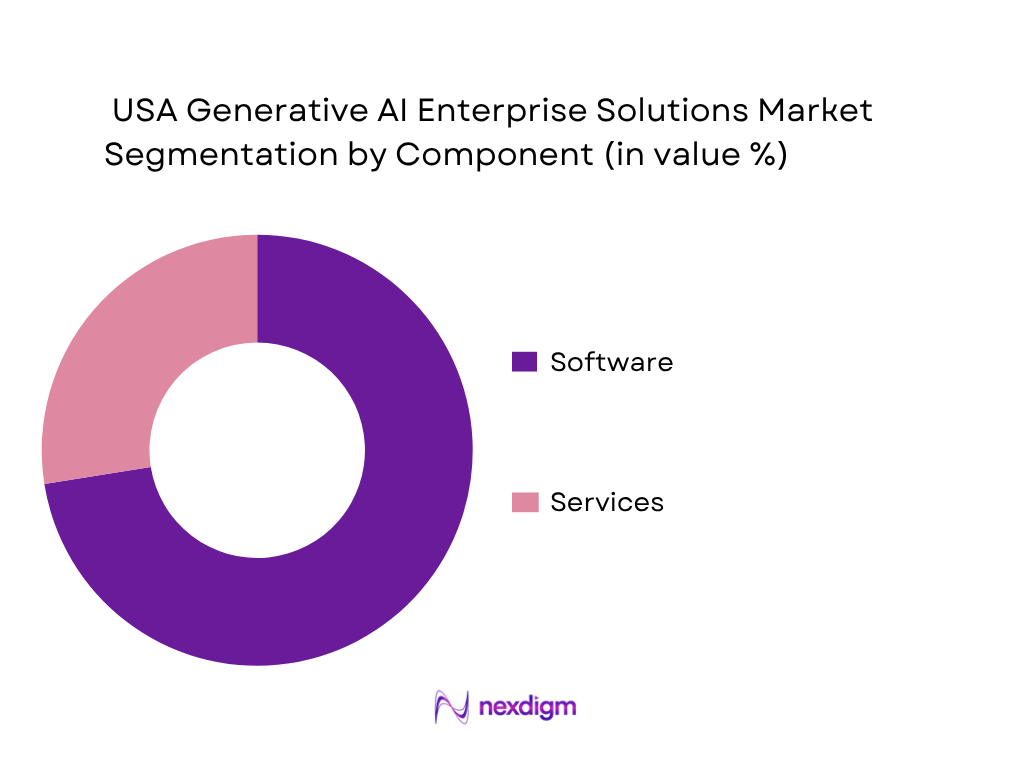

By Component

The market is segmented by component into software and services. Software holds the dominant share of the USA Generative AI Enterprise Solutions Competitive Intelligence & Benchmarking Market because enterprise buyers are prioritizing repeatable platforms over one-off experimentation. Spending is concentrating around copilots, enterprise chat layers, agent builders, model gateways, RAG tools, observability, and governance software that can be rolled out across business units. Vendors are also packaging more value into managed platforms, making software licenses and consumption charges the primary commercial layer, while services remain essential but secondary. This dominance is reinforced by enterprise buyers' preference for integrated security, admin controls, and connectors over building custom stacks from scratch. Grand View Research identifies software as the largest revenue-generating component in the U.S. enterprise generative AI market, with a share above 72.5%.

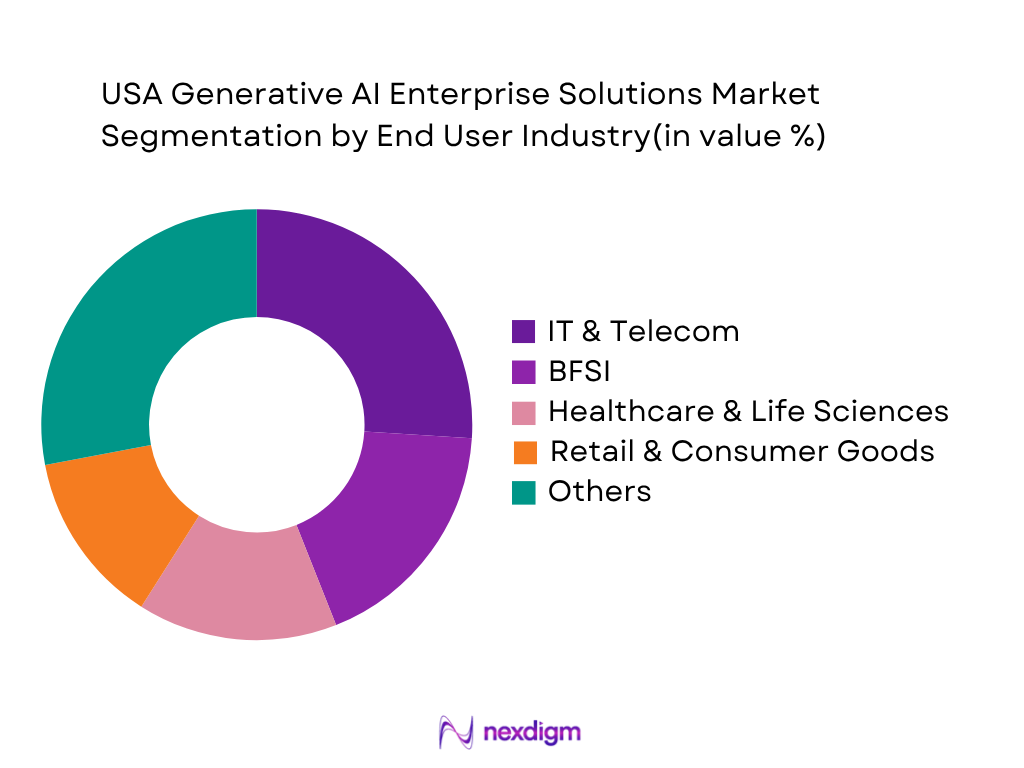

By End-User Industry

The market is segmented by end-user industry into IT & Telecom, BFSI, Healthcare & Life Sciences, Retail & Consumer Goods, Manufacturing, and Professional Services & Others. IT & Telecom holds the dominant share of the USA Generative AI Enterprise Solutions Competitive Intelligence & Benchmarking Market because these firms already operate cloud-native infrastructure, large developer populations, API-heavy workflows, and strong data engineering teams. They are also the earliest adopters of copilots for software development, ticket resolution, code generation, search, and internal knowledge workflows. In addition, many telecom and software firms sit close to hyperscaler ecosystems, giving them faster access to production-grade model services and enterprise controls. Grand View Research identifies IT & Telecom as the leading end-use segment globally in enterprise generative AI, and U.S. enterprise adoption patterns continue to reflect that same structure, especially in engineering-led deployments.

Competitive Landscape

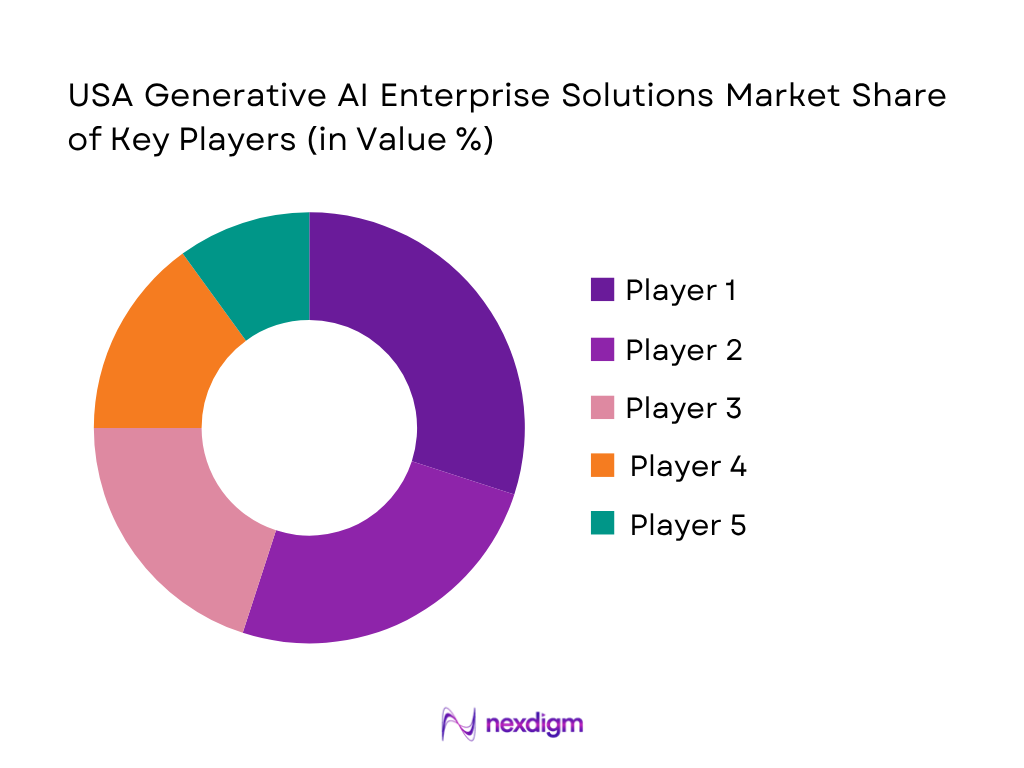

The USA Generative AI Enterprise Solutions Competitive Intelligence & Benchmarking Market is dominated by a concentrated group of hyperscaler-backed platforms and model-led enterprise vendors, especially Microsoft, AWS, Google Cloud, OpenAI, and Anthropic. This concentration reflects enterprise buying preferences for integrated model access, secure administration, enterprise connectors, governance controls, and scalable cloud infrastructure. Competition is intense, but the battleground is no longer just model quality; it now includes agent orchestration, RAG maturity, deployment flexibility, observability, and pricing control.

| Company | Establishment Year | Headquarters | Core Enterprise GenAI Offering | Model Strategy | Agent / RAG Capability | Deployment Orientation | Governance / Admin Controls | Pricing Motion |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Microsoft | 1975 | Redmond, Washington | – | – | – | – | – | – |
| Amazon Web Services | 2006 | Seattle, Washington | – | – | – | – | – | – |
| Google Cloud | 1998* | Mountain View, California | – | – | – | – | – | – |
| OpenAI | 2015 | San Francisco, California | – | – | – | – | – | – |
| Anthropic | 2021 | San Francisco, California | – | – | – | – | – | – |

Competition Benchmarking Analysis

Competition benchmarking in this market increasingly centers on eight practical buying variables: model access breadth, agent orchestration depth, RAG quality, enterprise connector strength, deployment flexibility, governance maturity, observability, and cost control. Microsoft is strongest where organizations want productivity-suite integration and identity-led control. AWS is strongest for multi-model flexibility and modular agent infrastructure. Google Cloud performs well in enterprise search, agent builder functionality, and model-development workflows. OpenAI remains highly influential in enterprise chat and API-led deployments, while Anthropic is especially strong in coding, long-context reasoning, and trusted enterprise knowledge access. The benchmark trend is clear: a strong model alone is no longer enough. Enterprise buyers increasingly favor platforms that combine secure deployment, workflow integration, governance, and measurable total-cost discipline.
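One way to make the eight buying variables comparable across vendors is a weighted scorecard. The sketch below is illustrative only: the weights, the 0 to 5 scoring scale, the function names, and the placeholder vendors "Vendor A" and "Vendor B" are all assumptions, not figures or methods taken from this report.

```python
# Hypothetical weights for the eight buying variables (assumed, sum to 1.0).
WEIGHTS = {
    "model_access_breadth": 0.15,
    "agent_orchestration_depth": 0.15,
    "rag_quality": 0.15,
    "enterprise_connector_strength": 0.10,
    "deployment_flexibility": 0.10,
    "governance_maturity": 0.15,
    "observability": 0.10,
    "cost_control": 0.10,
}


def weighted_score(scores, weights=WEIGHTS):
    """Return the weighted average of per-variable scores (assumed 0-5 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[k] * scores[k] for k in weights) / total_weight


def rank_vendors(scorecards, weights=WEIGHTS):
    """Return (vendor, score) pairs sorted from highest to lowest score."""
    ranked = [
        (name, round(weighted_score(scores, weights), 2))
        for name, scores in scorecards.items()
    ]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


# Placeholder scorecards; the numbers are invented purely for illustration.
scorecards = {
    "Vendor A": {k: 4 for k in WEIGHTS},
    "Vendor B": {**{k: 3 for k in WEIGHTS}, "rag_quality": 5},
}

if __name__ == "__main__":
    for vendor, score in rank_vendors(scorecards):
        print(vendor, score)
```

Dividing by the total weight keeps the result on the same scale as the inputs even if a buyer adjusts the weights so they no longer sum to exactly 1.0.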

USA Generative AI Enterprise Solutions Competitive Intelligence & Benchmarking Market Analysis

Growth Drivers

Expansion of Enterprise Copilots into Daily Productivity Workflows

Enterprise copilots are moving from limited experimentation into mainstream work because U.S. business AI adoption is climbing in measurable terms. The U.S. Census Bureau found that the share of businesses using AI to produce goods or services rose from 3.7% to 5.4% between September and late February, with expected use reaching about 6.6% by early fall. By May, overall AI use was close to 10%, while large businesses with 250 or more employees reported 11% use versus 7% among firms with 1–4 employees. This is happening in a macro environment that still supports software rollouts: U.S. GDP reached USD 28.75 trillion, GDP per capita reached USD 84,534, and national earnings rose 5.5%, giving enterprises both the scale and income base to normalize copilot deployment inside office, coding, and service workflows.

Shift from Proof-of-Concept to Scaled Production Deployments

The market is being pushed forward by enterprises moving beyond isolated proofs of concept into broader operating models. The strongest evidence is not only business AI use rising from 5.4% toward 6.6%, but also the breadth of functions already tied to AI: 2.5% of firms reported marketing automation use, 1.9% used virtual agents or chatbots, 1.7% used natural language processing, and 1.5% each used text analytics and data analytics. Production scaling also aligns with broader business capacity: U.S. real GDP increased 2.8%, corporate profits increased by USD 281.3 billion, and current-dollar personal income increased 5.4%. Those figures matter for this market because scaled deployment requires firms to commit budget to integration, governance, and recurring usage rather than keeping AI confined to innovation teams.

Market Challenges

Unclear ROI at Scale Across Departments

The biggest scaling challenge is that enterprise AI adoption is still rising from a relatively low base, making it difficult to prove return on investment uniformly across all departments. The share of U.S. businesses using AI reached 5.4% by late February and was expected to reach 6.6% by early fall, showing momentum but not yet universal penetration. The labor and cost backdrop also raises the bar for proof: private-industry compensation increased 3.6%, wages increased 3.8%, and the unemployment rate averaged 4.0%. That means enterprises must show whether generative AI actually reduces effort, increases output, or shortens cycle times enough to justify platform-wide expansion. Without department-level business cases, many firms remain stuck between localized wins and enterprise rollouts, especially when budget owners want measurable gains beyond productivity anecdotes.

Hallucination, Reliability, and Low-Confidence Output Risks

Hallucination and reliability remain core barriers because the highest-growth enterprise use cases are language and decision-adjacent tasks where errors can damage trust quickly. Census data show businesses are already using natural language processing at 1.7%, text analytics at 1.5%, large language models at 1.0%, and decision-making systems at 0.5%. As those tools move into enterprise documents, customer interactions, and knowledge retrieval, the consequences of low-confidence output rise. That is why NIST issued the Generative AI Profile and SP 800-218A on July 26, emphasizing structured risk management and secure development practices for generative AI systems. The problem is not abstract: the FBI recorded USD 16.6 billion in reported cybercrime losses, showing how costly misleading, manipulated, or poorly controlled digital content can become once it enters operational workflows.

Opportunities

AI Agents for Back-Office and Cross-System Execution

A major opportunity lies in back-office agents because current U.S. business usage already shows demand for repetitive, workflow-heavy tasks rather than only front-end content generation. Census data show AI is being used for marketing automation (2.5%), virtual agents (1.9%), data analytics (1.5%), decision-making systems (0.5%), and robotic process automation (0.3%), with much higher expected usage among future adopters. These are precisely the building blocks for cross-system execution in finance, procurement, service operations, and internal support. The macro case is strong: U.S. GDP stood at USD 28.75 trillion, personal income increased 5.4%, and earnings increased 5.5%. In a large economy with rising labor costs and heavy administrative workload, vendors that can turn copilots into execution agents have room to capture enterprise demand without relying on speculative assumptions about the future.

Industry-Specific Copilots for Regulated Workflows

Regulated workflows are a particularly attractive opportunity because enterprise buyers increasingly want AI that understands domain language, internal controls, and decision boundaries. The Information sector already reports current AI use of 18.1%, and employment-weighted current use there is 22%, showing that highly digital industries adopt AI faster when use cases are clearly defined. At the same time, NIST released the Generative AI Profile and SP 800-218A in July, providing a stronger framework for organizations that need trust, testing, and secure development. The FBI’s reported USD 16.6 billion in cybercrime losses also makes unsupervised deployment less acceptable in sensitive environments. This opens opportunity for regulated-workflow copilots in finance, healthcare, legal, public-sector contracting, and cybersecurity, segments where precision, traceability, and policy controls matter more than generic model novelty.

Future Outlook

The market is entering a scale-up phase in which enterprise budgets are likely to shift from experimentation to standardized platform adoption. Growth will be supported by broader rollout of copilots, deeper use of agentic AI, more spending on enterprise search and RAG, and rising demand for governance software that can manage risk across models and workflows. Over the forecast period, the strongest expansion is expected in software engineering, customer operations, internal productivity, and regulated knowledge workflows. For long-range planning, this report uses a ~% CAGR benchmark for the 2026–2035 period, aligned with published enterprise generative AI forecasts through 2035.
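For readers who want to apply a CAGR benchmark to their own planning figures, the arithmetic can be sketched as follows. The start and end values used here are placeholders, not figures from this report, and the sketch assumes that 2026 to 2035 is treated as nine annual compounding periods between two year-end values.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `start_value` at `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


if __name__ == "__main__":
    # Placeholder inputs (assumed): an index of 100 in 2026 doubling by 2035.
    periods = 2035 - 2026
    rate = cagr(100.0, 200.0, periods)
    print(f"Implied CAGR: {rate:.2%}")
    print(f"Check, projected 2035 value: {project(100.0, rate, periods):.1f}")
```

If the forecast is instead measured from a 2025 base year, the same functions apply with ten periods, which lowers the implied rate for the same end value.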

Major Players

- Microsoft

- Amazon Web Services

- Google Cloud

- OpenAI

- Anthropic

- Salesforce

- IBM

- Oracle

- SAP

- Databricks

- Snowflake

- ServiceNow

- NVIDIA

- Cohere

- Palantir

Key Target Audience

- Chief AI Officers, CIO offices, and enterprise transformation leaders

- Chief Data Officers and enterprise data platform teams

- Software and SaaS product strategy teams

- Cloud infrastructure and platform engineering leaders

- Cybersecurity, model risk, and responsible AI teams

- Investment and venture capital firms

- Government and regulatory bodies (NIST, FTC, CISA, SEC, U.S. Department of Commerce)

- Corporate development, M&A, and strategic partnership teams

Research Methodology

Step 1: Identification of Key Variables

The initial phase involves building an ecosystem map of the USA Generative AI Enterprise Solutions Competitive Intelligence & Benchmarking Market. This includes frontier model vendors, hyperscalers, enterprise software firms, orchestration platforms, governance vendors, and enterprise buyers. The goal is to identify the variables that most influence market performance, such as software mix, deployment model, buyer function, end-use concentration, and pricing structure.

Step 2: Market Analysis and Construction

In this phase, historical market data is compiled from credible published sources, especially U.S. and global enterprise generative AI datasets, vendor product disclosures, and adjacent enterprise AI benchmarks. The market is then constructed using a bottom-up logic that connects platform revenues, enterprise deployment patterns, and use-case monetization. This helps isolate the enterprise solutions layer from the broader generative AI market.
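The bottom-up logic described above can be illustrated with a small calculation that builds revenue from deployments, paid seats, and consumption spend. This is a minimal sketch under stated assumptions: the `Segment` fields, the formula, and every number below are hypothetical and are not the report's actual model or data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One solution segment in a hypothetical bottom-up model."""
    name: str
    active_deployments: int            # enterprises running the solution
    paid_seats_per_deployment: float   # average licensed users per enterprise
    annual_price_per_seat: float       # USD per seat per year
    annual_consumption_spend: float    # USD of API/usage spend per enterprise

    def revenue(self) -> float:
        """Seat revenue plus consumption revenue for the segment."""
        seat_revenue = (
            self.active_deployments
            * self.paid_seats_per_deployment
            * self.annual_price_per_seat
        )
        usage_revenue = self.active_deployments * self.annual_consumption_spend
        return seat_revenue + usage_revenue


def bottom_up_total(segments):
    """Sum segment revenues into a total market estimate."""
    return sum(s.revenue() for s in segments)


def revenue_shares(segments):
    """Return each segment's share of the bottom-up total."""
    total = bottom_up_total(segments)
    return {s.name: s.revenue() / total for s in segments}


# Invented inputs, chosen only to make the arithmetic easy to follow.
segments = [
    Segment("copilots", 10, 100, 300.0, 5_000.0),
    Segment("rag_search", 5, 0, 0.0, 30_000.0),
]

if __name__ == "__main__":
    print(bottom_up_total(segments))
    print(revenue_shares(segments))
```

Keeping seat revenue and consumption revenue as separate terms makes it easier to reconcile the total against a top-down estimate, since the two revenue streams are usually disclosed differently by vendors.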

Step 3: Hypothesis Validation and Expert Consultation

Working hypotheses are created around component dominance, industry demand concentration, buying behavior, and long-term growth. These are validated through enterprise adoption studies, AI market trackers, vendor documentation, and policy frameworks that reflect how organizations are scaling generative AI in production. This stage is critical for separating pilot activity from true enterprise revenue realization.

Step 4: Research Synthesis and Final Output

The final phase synthesizes all findings into a market-specific report structure covering overview, segmentation, competition, benchmarking, future outlook, and demand-side relevance. Quantitative figures are retained only where they can be anchored to published sources, while segment-share allocations not directly disclosed in public datasets are clearly labeled as analyst estimates. This ensures the report remains both decision-useful and transparent.

- Executive Summary

- Research Methodology (Market Definitions, Solution Boundary Mapping, Enterprise Solution Inclusion Criteria, Benchmarking Framework, Competitive Intelligence Model, Demand-Side Validation, Supply-Side Validation, Bottom-Up Revenue Mapping, Top-Down Market Sizing, Use-Case Adoption Weighting, Pricing Normalization Logic, Limitations and Assumptions)

- Market Definition and Scope

- Market Evolution from Foundation Models to Enterprise Copilots and Agentic AI

- Market Architecture Overview

- Enterprise Buying Journey and Evaluation Funnel

- Value Chain Analysis

- Ecosystem Mapping

- Timeline of Major Platform and Product Launches

- Business Cycle and Monetization Logic

- Growth Drivers

Expansion of Enterprise Copilots into Daily Productivity Workflows

Shift from Proof-of-Concept to Scaled Production Deployments

Rising Demand for Agentic AI and Workflow Automation

Multi-Model Access and Vendor-Neutral Orchestration Demand

Growing Importance of Grounded Enterprise Search and RAG

Increasing Need for Governance, Evaluation, and Auditability

Cost Optimization Through Prompt Caching, Routing, and Tiered Inference

Acceleration of Domain-Specific and Industry Workflow Assistants

- Market Challenges

Unclear ROI at Scale Across Departments

Hallucination, Reliability, and Low-Confidence Output Risks

Unstructured Data Readiness and Connector Complexity

Security, Privacy, and Data Residency Concerns

Vendor Lock-In and Closed Ecosystem Dependence

Cost Volatility in Usage-Based Commercial Models

Integration Difficulty with Legacy Enterprise Applications

Talent Shortage in LLMOps, Evaluation, and Responsible AI

- Opportunities

AI Agents for Back-Office and Cross-System Execution

Industry-Specific Copilots for Regulated Workflows

Evaluation-Led Competitive Differentiation

Governance Platforms as a Strategic Control Layer

AI Gateway, Policy Enforcement, and Model Routing Layers

Smaller Models, Edge Inference, and Cost-Efficient Workloads

Enterprise Knowledge Graph plus RAG Convergence

Partner-Led Implementation and Managed GenAI Services

- Market Trends

From Chat Interfaces to Embedded Workflow AI

Rise of Agentic AI Platforms and Multi-Agent Architectures

Evaluation, Observability, and Guardrails Becoming Core Buying Criteria

Platform-Native AI Gaining Share over Point Solutions

Stronger Demand for US-Only Processing and Controlled Deployment

Prompt Caching, Batch Inference, and Tiered Service Models for TCO Control

Convergence of Data Platform, Search, and GenAI Application Layers

Expansion of Role-Based AI Assistants Across Departments

- Regulatory and Governance Landscape

NIST AI RMF and Generative AI Profile Relevance

Sector-Specific Compliance Considerations

Model Risk Management and Human-in-the-Loop Controls

Third-Party Model Governance and Procurement Review

Responsible AI Policy and Enterprise Guardrail Adoption

- Porter’s Five Forces (Buyer Power, Vendor Concentration, Substitute Risk, Entry Barriers, Ecosystem Rivalry)

- SWOT Analysis (Capability Breadth, Ecosystem Strength, Cost Structure, Trust Layer, Execution Risks, Scalability)

- PESTLE Analysis (Policy, Capital Allocation, Skills Availability, Compute Economics, Legal Exposure, Sustainability of AI Infrastructure)

- Stakeholder Ecosystem (Model Providers, Cloud Platforms, Data Platforms, Enterprise ISVs, SIs, Security Vendors, Buyers, Regulators)

- USA Generative AI Enterprise Solutions Competition Benchmarking Analysis

- Benchmarking Framework Design (Evaluation Methodology, Scoring Weightages, Enterprise Use-Case Mapping, Normalization of Metrics, Cross-Vendor Comparability Framework)

- Platform Capability Benchmarking (End-to-End AI Stack Coverage, Copilot Capabilities, Agent Orchestration Depth, Workflow Automation Capability, API and SDK Maturity)

- Foundation Model Benchmarking (Model Accuracy, Context Window Size, Latency, Cost per Token, Multimodal Capability, Fine-Tuning Support, Model Availability Strategy)

- Agentic AI Benchmarking (Multi-Agent Coordination, Tool Use Capability, Memory Persistence, Task Autonomy, Workflow Integration, Reliability under Complex Tasks)

- RAG and Enterprise Search Benchmarking (Retrieval Accuracy, Grounding Quality, Vector Database Integration, Data Connector Breadth, Latency Performance, Hallucination Reduction Efficiency)

- Enterprise Integration Benchmarking (ERP/CRM Integration, Data Warehouse Integration, SaaS Application Connectivity, API Ecosystem, Low-Code/No-Code Enablement)

- Governance, Security, and Compliance Benchmarking (Access Control Mechanisms, Data Privacy Controls, Auditability, Model Monitoring, Bias Detection, Regulatory Compliance Readiness)

- Deployment and Infrastructure Benchmarking (Cloud Availability, Hybrid Deployment Support, On-Prem Capabilities, Sovereign Deployment Options, Scalability, Compute Optimization)

- Pricing and Cost Efficiency Benchmarking (Token Pricing Efficiency, Subscription vs Consumption Mix, Cost Predictability, Optimization Tools, Total Cost of Ownership)

- Observability and Evaluation Benchmarking (Model Evaluation Frameworks, Prompt Testing Tools, Performance Monitoring, Logging and Traceability, Human-in-the-Loop Support)

- Developer and Enterprise UX Benchmarking (Ease of Implementation, Developer Tooling, Documentation Quality, Time-to-Deployment, Learning Curve)

- Ecosystem and Partner Strength Benchmarking (System Integrator Ecosystem, Marketplace Strength, Third-Party Extensions, Developer Community Size, Strategic Partnerships)

- Industry-Specific Solution Benchmarking (Vertical AI Solutions, Domain-Specific Models, Pre-Built Industry Agents, Compliance-Ready Templates)

- Innovation and Product Velocity Benchmarking (Frequency of Model Releases, Feature Rollouts, Agent Framework Evolution, Enterprise Feature Expansion)

- Vendor Lock-In and Flexibility Benchmarking (Multi-Model Support, Open vs Closed Ecosystem, Data Portability, Switching Cost, Interoperability)

- Time-to-Production Benchmarking (Implementation Time, Deployment Complexity, PoC-to-Production Conversion Rate, Integration Time)

- ROI and Business Impact Benchmarking (Productivity Gains, Cost Savings, Revenue Impact, Workflow Automation Efficiency, Adoption Rate Across Departments)

- By Revenue, 2020-2025

- By Active Enterprise Deployments, 2020-2025

- By Paid Seats / Licensed Users, 2020-2025

- By API and Consumption Spend, 2020-2025

- By Average Contract Value, 2020-2025

- By Average Revenue per Enterprise Account, 2020-2025

- By Solution Type (In Revenue %)

Enterprise AI Assistants / Copilots

Agent Development and Orchestration Platforms

Retrieval-Augmented Generation and Enterprise Search Solutions

Model Access and Inference Platforms

AI Governance, Guardrails, and Risk Management Platforms

AI Development Platforms and LLMOps / MLOps Layers

AI-Enabled Analytics and Data Intelligence Solutions

- By Deployment Model (In Revenue %)

Public Cloud Managed Deployment

Private Cloud / Dedicated Instance

Hybrid Deployment

On-Premise / Self-Hosted Deployment

Sovereign / Restricted Environment Deployment

- By Model Strategy (In Revenue %)

Single-Model Standardization

Multi-Model Routing

Open-Weight Model Strategy

Closed-Model Enterprise Stack

Fine-Tuned / Domain-Adapted Model Strategy

RAG-First, No Fine-Tuning Strategy

- By Enterprise Function (In Revenue %)

Customer Service and Contact Center

Software Engineering and IT Operations

Knowledge Management and Enterprise Search

Sales, Marketing, and Revenue Operations

HR, Learning, and Internal Productivity

Finance, Procurement, and Legal Operations

Cybersecurity and Risk Operations

Industry Workflow Automation

- By Industry Vertical (In Revenue %)

BFSI

Healthcare and Life Sciences

Retail and Consumer Goods

Manufacturing and Industrial

Technology, Media, and Telecom

Energy and Utilities

Public Sector and Government Contractors

Logistics and Transportation

- By Enterprise Size (In Revenue %)

Large Enterprises

Upper Mid-Market Enterprises

Regulated Mid-Market Enterprises

Digital-Native Enterprises

- By Buyer Type (In Revenue %)

CIO / CTO Office

Business Function Leaders

Data and AI Center of Excellence

Security, Risk, and Compliance Teams

Procurement / Strategic Sourcing

- By Pricing Model (In Revenue %)

Per-User Subscription

Token / Consumption-Based Pricing

Credit-Based / Action-Based Pricing

Platform License plus Usage

Outcome / Project-Based Commercial Model

- Market Share of Major Players on the Basis of Revenue and Enterprise Deployment Base

- Competitive Positioning Matrix (Platform-Native Leaders, Model-Led Challengers, Data-Layer Specialists, Workflow AI Specialists, Governance-Led Specialists)

- Cross Comparison Parameters (Foundation Model Access Strategy, Agent Orchestration Depth, RAG / Enterprise Search Capability, Enterprise Connector Breadth, Governance and Policy Control Layer, Deployment Flexibility, Pricing and Cost-Control Mechanisms, Observability / Evaluation and Auditability)

- Benchmarking of Major Players by Use Case Fit

- Benchmarking by Deployment Readiness and Security Posture

- Benchmarking by Developer Experience and Admin Controls

- Benchmarking by TCO and Commercial Flexibility

- Benchmarking by Industry Solution Depth

- Pricing Analysis (Per Seat, Per Token, Per Credit, Dedicated Capacity, Hybrid Commercial Models)

- Strategic Developments (Product Launches, Partnerships, Acquisitions, Model Releases, Agent Framework Launches, Ecosystem Expansion)

Detailed Profiles of Major Companies

Microsoft

Google Cloud

Amazon Web Services

OpenAI

Anthropic

Salesforce

IBM

Oracle

SAP

Databricks

Snowflake

ServiceNow

NVIDIA

Cohere

Palantir

- Enterprise Demand by Use Case Cluster

- Budget Allocation by AI Layer

- Build vs Buy vs Partner Preference Analysis

- Decision-Making Unit and Purchase Journey

- Evaluation Criteria Used by Enterprise Buyers

- Pain Point Analysis by Buyer Persona

- Implementation Maturity by Enterprise Type

- Renewal, Expansion, and Vendor Consolidation Behavior

- By Revenue, 2026-2035

- By Active Enterprise Deployments, 2026-2035

- By Paid Seats / Licensed Users, 2026-2035

- By API and Consumption Spend, 2026-2035

- By Average Contract Value, 2026-2035

- By Average Revenue per Enterprise Account, 2026-2035